AI: is there a middle ground?

My thoughts after using AI seriously for a year

This post has been brewing in the back of my head for a little while and I think it's time to commit this to (virtual) paper. I've been having some spicy thoughts about AI, and not the ones I thought I would have when I started using it a few years ago. I'm seeing the pros and cons of it all and figured I'd write a bit of a post both to clarify and summarise my own thoughts and feelings and to see whether it tracks with the rest of you.

How it started

I've shared on this blog before that I started off a staunch AI skeptic, and that's true. I tried Copilot a few years ago, didn't see what the fuss was about and pretty much immediately discounted AI as a serious tool. I'd studied neural nets and LLMs at university and thought to myself "it's all just maths and weights and averages, how on earth can this help me code?" I didn't realise it at the time, but I had really only scratched the surface of what AI could do - I was using Copilot as a fancy autocomplete, which is probably where most of us start.

On top of that, I figured that the moral downsides far outweighed the upsides of using AI in my work - you know, the fact that companies are basically stealing our data to feed to their LLMs, that AI is disrupting a lot of people's livelihoods and, last but not least, that it's destroying the only planet we have. This honestly really bugged me, and we had serious conversations on my team at the time: we were being highly encouraged to use AI, but a lot of us were simply not comfortable doing so for those reasons.

So what changed?

My journey with AI

In the intervening period, AI has become far more commonplace in tech and is now a standard part of a developer's toolkit. It didn't start off that way for me, and it was a pretty long journey from deciding AI "wasn't for me" to coming around to it and seeing how useful it is.

My new role has had a lot to do with it if I'm honest. Starting a new job is an opportunity to try new things and figure stuff out, and my new team has been playing and experimenting quite a bit with AI. I discovered agent mode for the first time (I'd been using ask mode like some kind of luddite before that) and finally saw what AI agents could do with the help of agent files, skills and better prompting. I started off with Copilot, had a brief flirtation with Cursor and decided I didn't like it, tried Claude in the terminal and finally settled on using VS Code's Claude Code extension. I think this is where I'm going to stay, but who knows - new tools are coming out all the time!

A few months back, my team did a whole migration from Enzyme to React Testing Library with the help of AI. Although it made a bunch of mistakes and somehow always managed to do some things in unconventional ways (despite us literally telling it our conventions!), it still saved us a bunch of time and effort in the long run and made an arduous migration a lot quicker (I have done an Enzyme to React Testing Library migration manually before, so I know).

So yeah, I'm no longer a staunch skeptic, can you believe it?

How it's going

Instead, I'm fully in the "this is brilliant, why didn't I use this before, how can I use this for everything?!" phase of adopting AI, and I'm noticing some downsides that I wanted to chat about.

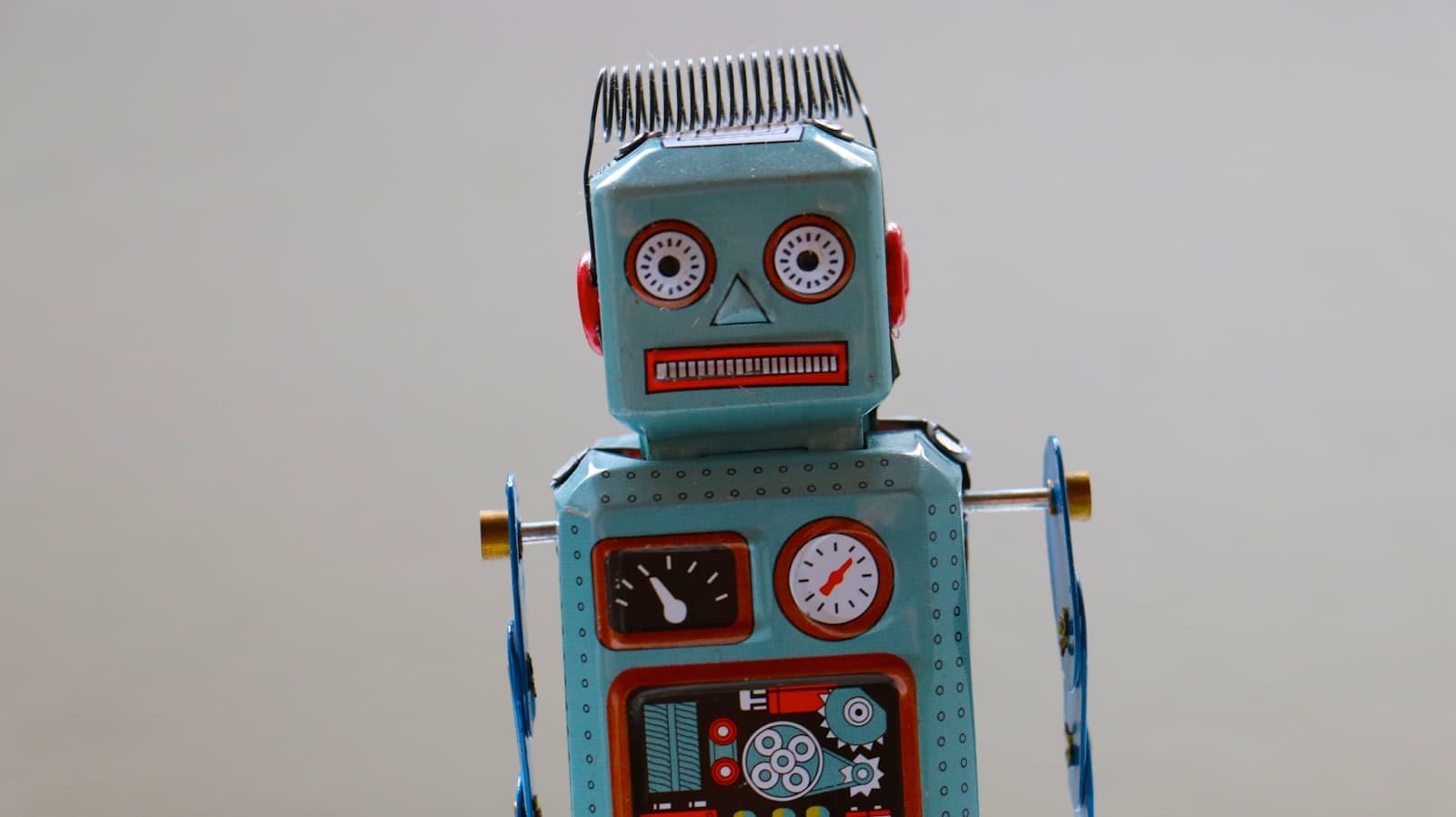

I'm finding myself using AI as a productivity lever - I can "be more productive" by sending AI off to do certain things while I work on something else and that is kind of addictive, but also not good for my brain. It's very cool that an AI agent can go away and build a UI component and write a bunch of tests for it with minimal input from me. However, this means I'm not doing that stuff - you know, the fun stuff? Instead I'm babysitting this overenthusiastic, sycophantic robot developer who despite having the whole internet at its disposal is somehow producing code at a level that is more junior than me and can't even do things the same way twice. All this while I'm also trying to write documentation, add tickets to our boards and anything else the AI can't do (yet).

In other words, I am spreading myself very thin and I'm not enjoying it. What started off as "isn't it cool that AI can do this?!" has become "guess I'll use AI to move as quickly as possible" (please note that nobody has told me to do this, this is a thing I've done to myself) and I'm not sure that's healthy. It's a level of productivity that will surely lead to burnout - I'm already feeling much more tired than I was a few months ago when I was using AI significantly less.

I'm also worried I'm going to stop learning and start forgetting. With Claude doing a lot of the heavy lifting, I'm no longer really thinking about the code. I'm just telling it what to do and reviewing what it's done to make sure it hasn't done anything ridiculous. I worry that as a result, code quality and architecture are going to suffer. Just because Claude is doing something in a certain way and it works doesn't necessarily mean it's the best way to do it. I'm finding that I'm using those mental muscles less and less and offloading more of the thinking to Claude, and I don't like that at all. As someone who has spent a long time honing those coding and architecture skills, I don't want to lose them.

And then there's obviously the moral shadiness of it all. AI's moral downsides haven't gone away and it's expensive to boot - someone shared our monthly bill on Slack recently and I was fully like "how much?!" So I'm not sure how comfortable I feel continuing to use it as much as I have lately. In a world that is very much encouraging us to lean on AI though, it's hard to say no sometimes. I'm fortunate enough to be working in a culture where it is acceptable not to use it if you don't want to, but not everyone gets that option these days.

Having become aware of all of these things, I want to try and make a bit of a plan going forwards, so I can still use AI (because I don't think the genie is going back in that bottle) while still retaining my sanity, my skills and my moral compass.

Full vibe coding kind of scares me...

Just as a quick aside, I had an experience just the other day that also informed this whole conversation: I fully vibe coded a Next.js App Router version of this blog. As you may have noticed, Hashnode have been changing things around here. The layout of my blog is different and I didn't ask for that! The UI to manage the blog has also changed (not for the better) and it made me wonder "how hard would it be to use the exported markdown files from Hashnode and create a simple statically generated version of this blog in Next.js to host on Vercel?"

Turns out, very easy, especially if you use AI! I only have Copilot on my personal machine so I used that instead of Claude, but within an hour, it had a respectable looking Next.js app up and running (I've even put it up on Vercel). The thing that gave me pause is that I realised "I have no idea how any of this works" and that scared the heck out of me. In this case, the site is statically generated so there is very little that could go wrong in terms of security, but what if I were building something interactive?

So yeah, fully vibe coding something is not for me. I have since checked the code and it does seem fine (I understand it at least) and maybe I'll switch to this new version at some point? But I'd like to make it more my own first. The whole experience really made me think about how much less I'd enjoy my job if it was just managing agents and not doing any coding of my own - even in my heaviest periods of AI use, I'm still writing at least some code myself.

My plan for the future

Anyway, given all of that, how do we as developers leverage AI and still get to enjoy our jobs? It must be possible, surely?

Personally, I am going to try and shift my approach from Claude being the main developer and me being its reviewer and sidekick to the other way around. Like switching drivers in a pairing session, I guess? I will go back to writing code (and yes, tests) and only when I get stuck or need a second pair of eyes will I involve the AI. Even then, maybe I'll have a go at trying to research options for myself, though doing that without AI is tricky in itself these days, given the state of Google search.

What will this look like, you ask? It means making a conscious effort to slow down. To pause before launching straight into development and think "what do I need to do?" Something I used to do frequently before AI got involved - now I just craft a prompt and send it on its way. Once I've got a plan, I can start working on writing the code needed to execute that plan and at that point, maybe Claude can give me a hand. It also means not going "I'm too tired" or "it's just easier to let Claude do this" - it can be so tempting to just let the AI do the work and that needs to stop.

I'd like to use it less for writing entire UI components or test suites and more for things like "are there any accessibility concerns you can see with this component?" or "can you spot any edge cases I've missed?" I am also going to set pomodoro timers and focus on one thing - it's the best way I've found to make sure I don't try and do five things at once and honestly, part of the problem here is me. I'm allowing myself to be spread too thin - AI is just making it easier to do so. I honestly don't know whether this approach will work yet, because I haven't tried it - I've literally come up with this now. Maybe I can do a follow-up in six months and let you know!

I would love to know if you have any tips for how to walk this new, rather futuristic line.